Communications Mining user guide

Selecting label confidence thresholds

Introduction

The platform is typically used in one of the first steps of an automated process: ingesting, interpreting and structuring an inbound communication, such as a customer email, much as a human would when that email arrives in their inbox.

When the platform predicts which labels or tags apply to a communication, it assigns each prediction a confidence score (%) to show how confident it is that the label applies.

If these predictions are to be used to classify the communication automatically, however, each one has to become a binary decision: does this label apply or not? This is where confidence thresholds come in.

A confidence threshold is the confidence score (%) at or above which an RPA bot or other automation service treats the platform's prediction as a binary "Yes, this label applies", and below which it treats the prediction as a binary "No, this label does not apply".
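As an illustration only, the following minimal sketch shows the at-or-above rule an automation might apply to a single prediction. The helper name `label_applies`, the message IDs and the scores are hypothetical, and confidences are assumed to be expressed as percentages from 0 to 100; this is not a Communications Mining API.

```python
def label_applies(confidence: float, threshold: float) -> bool:
    """Hypothetical helper: turn a confidence score (%) into a Yes/No decision.

    At or above the threshold -> True  ("Yes, this label applies").
    Below the threshold       -> False ("No, this label does not apply").
    """
    if not 0.0 <= threshold <= 100.0:
        raise ValueError("threshold must be a percentage between 0 and 100")
    return confidence >= threshold


# Hypothetical confidence scores (%) for a single label on three emails
scores = {"email-1": 91.2, "email-2": 68.7, "email-3": 40.5}

decisions = {msg: label_applies(score, threshold=68.7) for msg, score in scores.items()}
print(decisions)  # {'email-1': True, 'email-2': True, 'email-3': False}
```

Note that a prediction exactly equal to the threshold counts as "Yes", matching the "at or above" wording.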

Make sure you understand how confidence thresholds work and how to select an appropriate one for each label, so that you achieve the right balance of precision and recall for that label.

Selecting a threshold for a label

To select a threshold for a label, proceed as follows:

- Navigate to the Validation page, and select the label from the label filter bar.

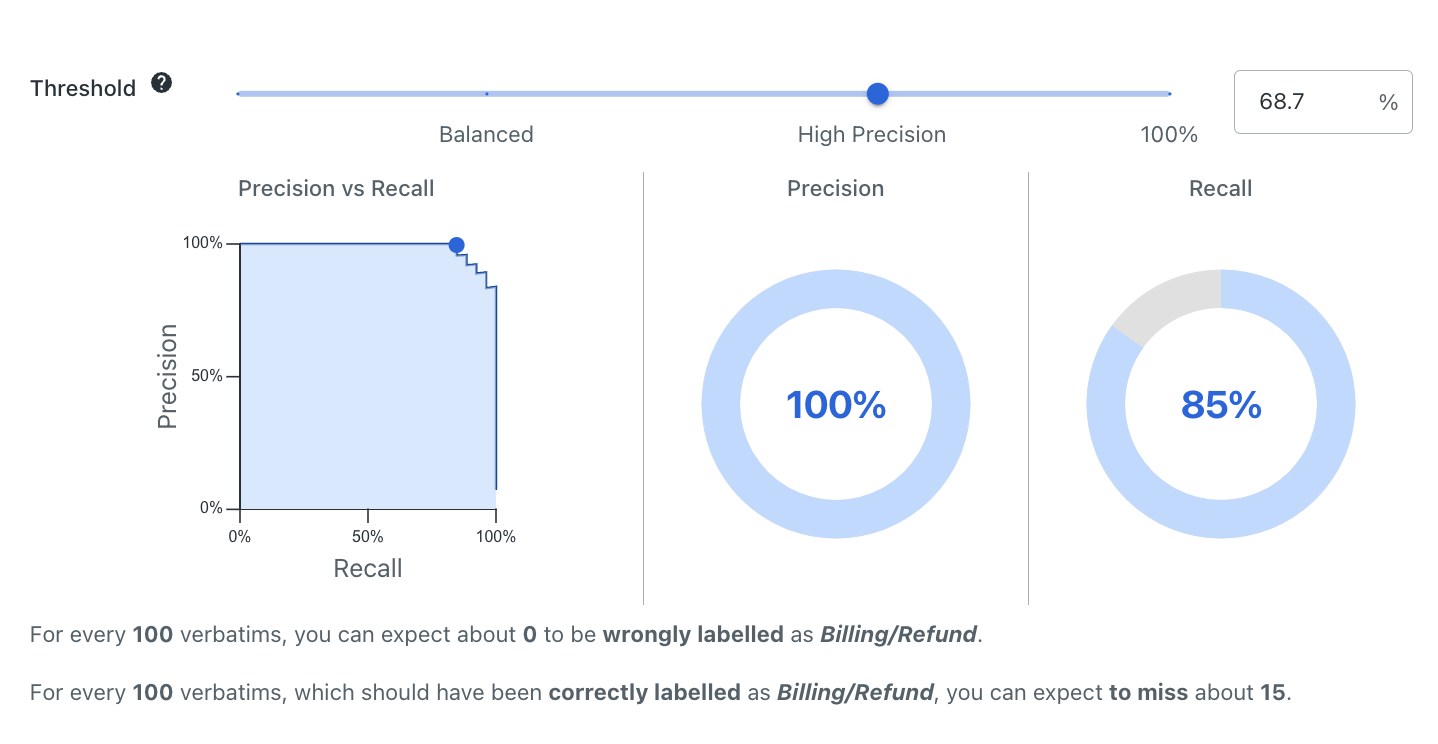

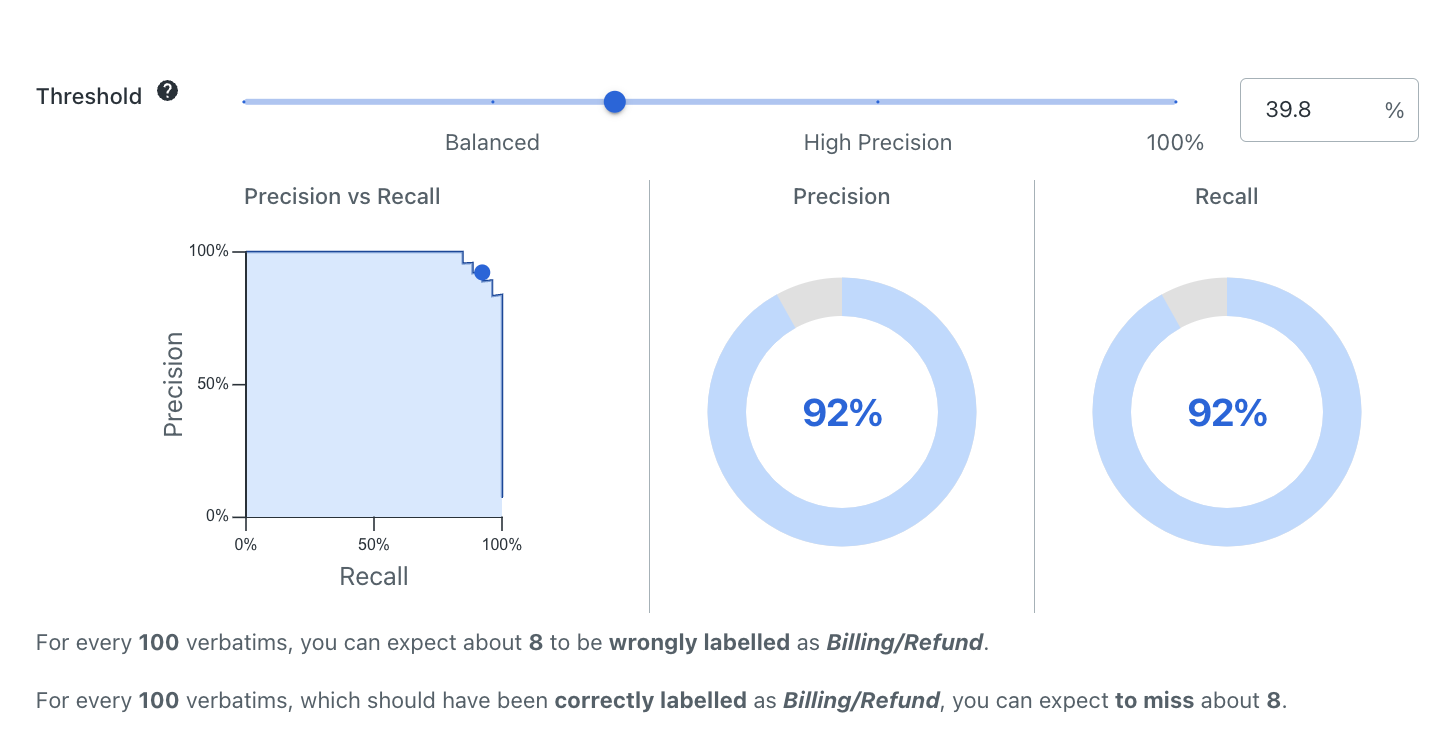

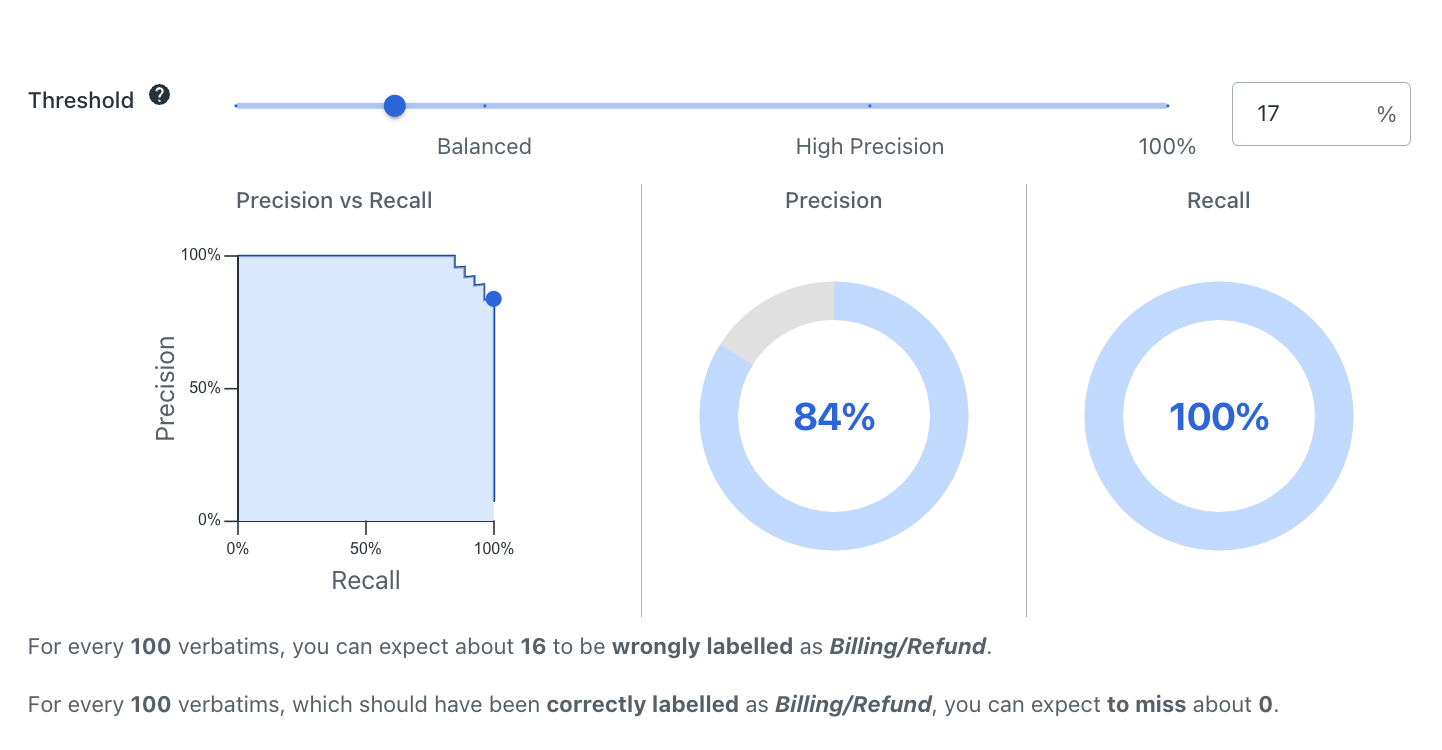

- Drag the threshold slider, or type a percentage into the box as shown in the following image, to view the precision and recall that would be achieved at that threshold.

- The precision versus recall chart gives you a visual indication of the confidence thresholds that would maximize precision or recall, or provide a balance between the two:

  - In Figure 1, the selected confidence threshold (68.7%) maximizes precision (100%): at this threshold the platform should typically get no predictions wrong, but recall is lower (85%) as a result.

  - In Figure 2, the selected confidence threshold (39.8%) provides a good balance between precision and recall (both 92%).

  - In Figure 3, the selected confidence threshold (17%) maximizes recall (100%): the platform should identify every instance where this label applies, but precision is lower (84%) as a result.

Figure 1. Label validation with confidence threshold set at 68.7%

Figure 2. Label validation with confidence threshold set at 39.8%

Figure 3. Label validation with confidence threshold set at 17%
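The statistics behind the slider can be approximated by hand. The sketch below, which assumes a small hypothetical set of annotated messages with confidences in %, counts true positives, false positives and false negatives at a given threshold and derives precision and recall from them. The function name and data are illustrative, not part of the platform, and the platform's own validation calculations may differ in detail.

```python
from typing import List, Tuple

# Hypothetical validation data for one label: (confidence in %, whether an
# annotator says the label truly applies to that message).
sample: List[Tuple[float, bool]] = [
    (95.0, True), (80.0, True), (70.0, False), (55.0, True),
    (30.0, True), (20.0, False), (10.0, False),
]


def precision_recall_at(threshold: float,
                        predictions: List[Tuple[float, bool]]) -> Tuple[float, float]:
    """Precision and recall if every confidence >= threshold is treated as "Yes"."""
    tp = fp = fn = 0
    for confidence, actually_applies in predictions:
        predicted = confidence >= threshold
        if predicted and actually_applies:
            tp += 1  # true positive: label applied correctly
        elif predicted:
            fp += 1  # false positive: label applied but should not have been
        elif actually_applies:
            fn += 1  # false negative: label missed
    # Convention (an assumption): with no "Yes" predictions there is nothing
    # wrong, and with no true instances there is nothing missed, so report 1.0.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


for t in (75.0, 50.0, 25.0):
    p, r = precision_recall_at(t, sample)
    print(f"threshold {t:.0f}%: precision {p:.0%}, recall {r:.0%}")
```

With this made-up data, lowering the threshold from 75% to 25% moves precision from 100% to 80% while recall rises from 50% to 100%. This is the same trade-off the three figures illustrate.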

Choosing the right threshold

Depending on your use case and the specific label in question, you might want to maximize either precision or recall, or find the threshold that gives the best possible balance of both.

When deciding on a threshold, consider the potential outcomes: what is the cost or consequence to your business if a label is incorrectly applied, or if it is missed?

For each label, choose the threshold based on which kind of error your business can better tolerate: a communication being incorrectly classified (a false positive), or one being missed (a false negative).
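One way to make this reasoning concrete is to assign a hypothetical cost to each false positive and each false negative, then pick the candidate threshold with the lowest total cost on annotated validation data. This is offered only as an illustration. It is not how Communications Mining selects thresholds, and real business costs are rarely this easy to quantify. The function names, costs and data below are all assumptions.

```python
from typing import List, Sequence, Tuple

Prediction = Tuple[float, bool]  # (confidence in %, label truly applies)

# Hypothetical validation data for one label
sample: List[Prediction] = [
    (95.0, True), (80.0, True), (70.0, False), (55.0, True),
    (30.0, True), (20.0, False), (10.0, False),
]


def total_cost(threshold: float, predictions: Sequence[Prediction],
               cost_fp: float, cost_fn: float) -> float:
    """Business cost of the mistakes made at a given threshold."""
    false_pos = sum(1 for c, actual in predictions if c >= threshold and not actual)
    false_neg = sum(1 for c, actual in predictions if c < threshold and actual)
    return false_pos * cost_fp + false_neg * cost_fn


def cheapest_threshold(predictions: Sequence[Prediction], cost_fp: float,
                       cost_fn: float, candidates: Sequence[float]) -> float:
    """Candidate threshold with the lowest total cost (the first one wins ties)."""
    return min(candidates, key=lambda t: total_cost(t, predictions, cost_fp, cost_fn))


candidates = [25.0, 50.0, 75.0]
# A missed label costs 10x more than a wrong one: a low, recall-friendly threshold wins
print(cheapest_threshold(sample, cost_fp=1, cost_fn=10, candidates=candidates))  # 25.0
# A wrong label costs 10x more than a missed one: a high, precision-friendly threshold wins
print(cheapest_threshold(sample, cost_fp=10, cost_fn=1, candidates=candidates))  # 75.0
```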

For example, suppose you want to automatically classify inbound communications into different categories, and you also have an Urgent label that routes requests to a high-priority work queue. You might want to maximize recall for this label so that no urgent requests are missed, and accept lower precision as a result. Putting some less urgent requests into the priority queue is unlikely to do much harm to the business, whereas missing a time-sensitive urgent request could be very detrimental.
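For a recall-first label such as Urgent, one simple approach is to look for the highest threshold that still meets a target recall on annotated data. A higher threshold keeps as much precision as possible without missing more urgent messages than the target allows. The sketch below is a hypothetical illustration with made-up data and a made-up function name, not the platform's method.

```python
from typing import List, Optional, Sequence, Tuple

Prediction = Tuple[float, bool]  # (confidence in %, label truly applies)

# Hypothetical validation data for the Urgent label
sample: List[Prediction] = [
    (95.0, True), (80.0, True), (70.0, False), (55.0, True),
    (30.0, True), (20.0, False), (10.0, False),
]


def highest_threshold_for_recall(predictions: Sequence[Prediction],
                                 target_recall: float = 1.0) -> Optional[float]:
    """Highest observed confidence that, used as a threshold, meets target_recall."""
    positives = sum(1 for _, actual in predictions if actual)
    if positives == 0:
        raise ValueError("need at least one example where the label truly applies")
    best = None
    # Try each observed confidence as a threshold, lowest first, and keep the
    # highest one that still meets the recall target.
    for t in sorted({c for c, _ in predictions}):
        found = sum(1 for c, actual in predictions if actual and c >= t)
        if found / positives >= target_recall:
            best = t
    return best


print(highest_threshold_for_recall(sample))        # 30.0: no urgent message missed
print(highest_threshold_for_recall(sample, 0.75))  # 55.0: one in four may be missed
```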

As another example, if you were automating end-to-end a type of request that involves a monetary transaction or is otherwise of high value, you would likely choose a threshold that maximizes precision, so that only the transactions the platform is most confident about are automated end-to-end. Predictions with confidence below the threshold would then be reviewed manually. This is because a wrong prediction (a false positive) is potentially very costly if a transaction is then processed incorrectly.
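A precision-first setup like this can be sketched in two parts. First, find the lowest threshold whose precision on annotated data meets a target. Among the thresholds that meet the target, the lowest one automates the most requests. Second, route each prediction either to end-to-end automation or to manual review. The function names, route labels and data below are hypothetical and only illustrate the idea.

```python
from typing import List, Optional, Sequence, Tuple

Prediction = Tuple[float, bool]  # (confidence in %, label truly applies)

# Hypothetical validation data for a high-value transaction label
sample: List[Prediction] = [
    (95.0, True), (80.0, True), (70.0, False), (55.0, True),
    (30.0, True), (20.0, False), (10.0, False),
]


def lowest_threshold_for_precision(predictions: Sequence[Prediction],
                                   target_precision: float = 1.0) -> Optional[float]:
    """Lowest observed confidence that, used as a threshold, meets target_precision."""
    for t in sorted({c for c, _ in predictions}):
        above = [actual for c, actual in predictions if c >= t]  # never empty here
        if sum(above) / len(above) >= target_precision:
            return t
    return None


def route(confidence: float, threshold: float) -> str:
    """Automate confident predictions and send everything else to a person."""
    return "automate end-to-end" if confidence >= threshold else "manual review"


threshold = lowest_threshold_for_precision(sample)  # 80.0 with this data
print(route(92.0, threshold))  # automate end-to-end
print(route(75.0, threshold))  # manual review
```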

Auto thresholds

Once a label has been sufficiently trained, Communications Mining™ automatically suggests the following thresholds for it:

- High Recall: maximizes the recall of your label while maintaining reasonable model precision.

- High Precision: maximizes the precision of your label with minimal compromise on the recall of the model.

- Balanced: strikes an even balance between precision and recall, without prioritizing one over the other.
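This section does not say how these suggestions are calculated, so the following is only an assumption-based illustration of what a Balanced threshold could mean. The sketch picks the observed confidence at which precision and recall on annotated data are closest to each other. The function name and data are hypothetical. Maximizing the F1 score is a common alternative definition of "balanced" and can give a slightly different threshold.

```python
from typing import List, Optional, Sequence, Tuple

Prediction = Tuple[float, bool]  # (confidence in %, label truly applies)

# Hypothetical validation data for one label
sample: List[Prediction] = [
    (95.0, True), (80.0, True), (70.0, False), (55.0, True),
    (30.0, True), (20.0, False), (10.0, False),
]


def balanced_threshold(predictions: Sequence[Prediction]) -> Optional[float]:
    """Observed confidence where precision and recall are closest to each other."""
    positives = sum(1 for _, actual in predictions if actual)
    if positives == 0:
        raise ValueError("need at least one example where the label truly applies")
    best_t, best_gap = None, float("inf")
    for t in sorted({c for c, _ in predictions}):
        above = [actual for c, actual in predictions if c >= t]  # never empty here
        tp = sum(above)
        precision, recall = tp / len(above), tp / positives
        gap = abs(precision - recall)
        if gap < best_gap:  # strict '<' keeps the lowest threshold on ties
            best_t, best_gap = t, gap
    return best_t


print(balanced_threshold(sample))  # 55.0: precision and recall are both 75%
```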